You start with one paper you’re genuinely excited about. Then another. Then a citation trail opens up, and suddenly you’re surrounded by PDFs, half-read abstracts, and a troubling feeling that you’re already behind.

At some point in most projects, the literature review starts to feel heavier than the research itself. That doesn’t mean you’re doing it wrong. It reflects how research has changed.

The challenge today isn’t always access. Often it’s scale.

You’re not sorting through dozens of papers anymore. You’re navigating thousands, and they don’t all live in one place. New studies appear every day, terminology shifts, and debates evolve while you’re still learning the basics. A modern literature review isn’t a straight path. It’s a system you learn to move through.

You might have heard the phrase “literature review workflow” more often lately. Having a clear process for managing your review, and knowing which tools support each step, can dramatically reduce the overwhelm.

What is a modern literature review workflow?

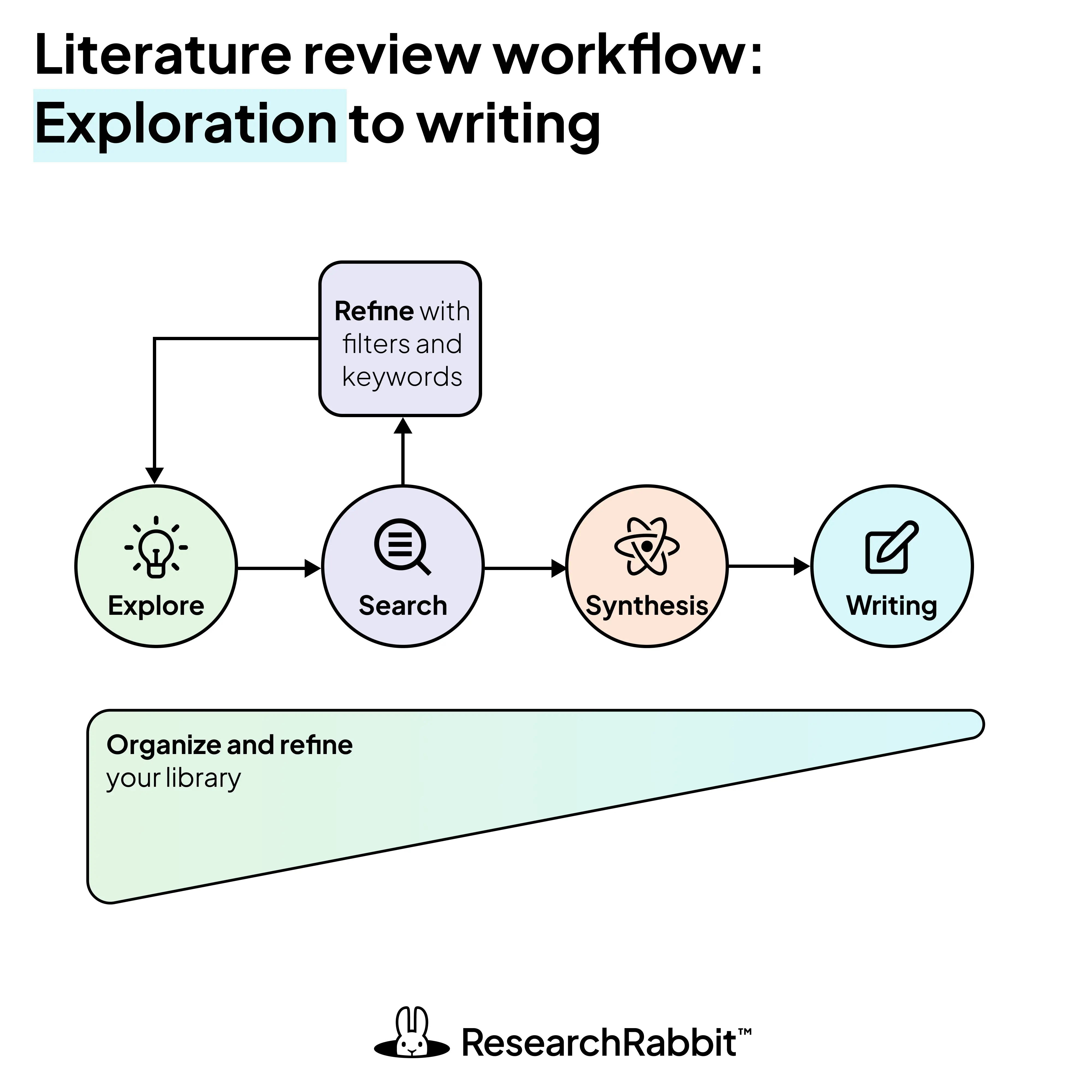

A modern literature review workflow combines exploratory discovery, structured database search, organized synthesis, and integrated writing to help researchers navigate large and rapidly evolving bodies of literature. Instead of a linear process, most researchers repeatedly revisit these steps. This iterative approach allows for a progressively clearer understanding of the field.

The real challenges researchers face today

Before getting into specific tools, it helps to name the two structural problems that make modern literature reviews difficult, and to see where planning your workflow can ease them.

The volume problem → solved with thoughtful filtering

Every year, PubMed alone adds more than a million new citations. Across disciplines, the growth is similar. You’re not missing something by feeling overwhelmed. The ecosystem has simply expanded beyond what any one person can track manually.

The challenge isn’t finding papers. It’s filtering meaningfully. Without structure, a literature review can slip into survival mode. You chase citations, reread abstracts you’ve seen before, and wonder whether you missed something important.

Modern tools can support this process through:

- Relevance ranking that surfaces what matters most

- Citation-based recommendations that learn from what you read

- Topic clustering that shows you the shape of a field

- AI-assisted screening that flags papers worth your attention

The silo problem → solved with structured search

Research doesn’t live in one place. It’s scattered across PubMed, Scopus, Web of Science, arXiv, preprint servers, and institutional repositories. Each database uses different indexing systems, keywords, and coverage.

Managing this fragmentation isn’t about finding one perfect tool. It’s about building connections between them. Researchers who navigate this well often combine:

- Broad discovery platforms like Google Scholar and Semantic Scholar

- Citation-based mapping tools like ResearchRabbit and Litmaps

- Traditional database searches with refined queries

- Reference managers that centralize everything in one library

The goal isn’t to rely on a single tool. It’s to create a connected ecosystem where papers flow from discovery to organization to writing with minimal friction.

The modern literature review workflow

Most experienced researchers don’t move through a literature review in a straight line. Instead, you cycle through overlapping phases, refining your understanding each time. The process is iterative, more like walking a spiral where each pass brings deeper clarity.

For a deeper look at how structured search and exploratory discovery work together, see our guide to Search vs. Discovery in Literature Reviews.

Phase 1: Exploratory mapping (discovery first)

Before locking into keywords and filtering, it helps to start with exploration.

Instead of asking, “What should I search?” try asking, “What does this field actually look like?”

Sometimes the easiest way into a field is through one paper that clearly matters. Visual discovery tools like Litmaps and ResearchRabbit let you start with a seed paper and expand outward through citation networks, following how ideas connect across the literature.

At this stage it also helps to set up a reference manager (such as Zotero) so you can save useful papers as you explore.

Patterns emerge quickly:

- Foundational works that anchor the field

- Influential authors whose names appear across multiple papers

- Emerging clusters where new conversations are forming

- Competing schools of thought that use different terms for related ideas

This phase reduces blind searching. You’re learning the language of the field before committing to formal search strings. Discovery builds intuition. Search builds rigor. You need both.
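To make the idea of expanding outward from a seed paper concrete, here is a minimal sketch in Python. It is not how Litmaps or ResearchRabbit work internally. The paper IDs and the `CITES` mapping are hypothetical toy data, and the function names are made up for illustration.

```python
from collections import deque

# Hypothetical toy data: each paper maps to the papers it cites.
CITES = {
    "seed": ["A", "B"],
    "A": ["C"],
    "B": ["C"],
    "D": ["seed"],
    "E": ["D", "C"],
    "C": [],
}

def backward(paper, cites):
    """Papers that `paper` cites (its reference list)."""
    return set(cites.get(paper, []))

def forward(paper, cites):
    """Papers that cite `paper`."""
    return {p for p, refs in cites.items() if paper in refs}

def expand(seed, cites, max_hops=2):
    """Breadth-first walk in both citation directions.

    Returns a dict mapping each reached paper to its hop distance from the seed.
    """
    seen = {seed: 0}
    queue = deque([seed])
    while queue:
        paper = queue.popleft()
        if seen[paper] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in sorted(backward(paper, cites) | forward(paper, cites)):
            if neighbor not in seen:
                seen[neighbor] = seen[paper] + 1
                queue.append(neighbor)
    return seen

def most_cited(cites):
    """Rank papers by how often they are cited within this small network."""
    counts = {}
    for refs in cites.values():
        for ref in refs:
            counts[ref] = counts.get(ref, 0) + 1
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))

one_hop = expand("seed", CITES, max_hops=1)
ranking = most_cited(CITES)
```

In this toy network, paper `C` is cited most often, which is the kind of signal that points to a foundational work. Real citation data would come from a database or discovery tool rather than a hand-written dictionary.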

Phase 2: Filtering and structured search

Once the landscape feels familiar, filtering and searching become more deliberate. This is also where transparency and reproducibility start to matter for a systematic review.

Approaches to filtering could include:

- Reading abstracts to see how they fit with your research priorities

- Sourcing foundational articles

- Using AI tools to help extract specific criteria into an evaluation matrix

- Filtering by journal ranking, publication dates, or citation counts

Modern practice for systematic reviews often includes:

- Boolean search strings refined through trial runs

- Controlled vocabulary like MeSH terms adapted for each database

- Forward and backward citation tracking from key papers

- Search alerts for new publications that match your criteria

- Documented protocols that you can return to later

Many researchers maintain evolving search strategies that adapt as understanding deepens. This approach is especially useful for systematic reviews, meta-analyses, or projects where methods need to be traceable. Rigor doesn’t mean rigidity. It means you can retrace your steps.
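As a rough illustration of how a Boolean search string is put together, the sketch below joins synonyms with OR inside each concept and joins the concepts with AND. The `build_query` helper and the example terms are assumptions for illustration only. Each database has its own syntax for field tags, truncation, and controlled vocabulary, so the output would still need adapting.

```python
def build_query(concepts):
    """Build a generic Boolean query.

    `concepts` is a list of synonym lists. Synonyms are joined with OR,
    concept groups are joined with AND, and multi-word terms are quoted.
    """
    groups = []
    for terms in concepts:
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)

# Hypothetical concept groups for a review on this article's own topic.
concepts = [
    ["literature review", "systematic review"],
    ["workflow", "process"],
]

query = build_query(concepts)
print(query)
# ("literature review" OR "systematic review") AND (workflow OR process)
```

Keeping the concept lists in a file alongside the date and database of each run is one simple way to make the search retraceable later.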

Phase 3: Organization and synthesis

Reading is rarely the bottleneck anymore. Organization is.

Researchers now rely on layered systems that go beyond simple folders.

Smart reference managers like Zotero, Mendeley, EndNote, and Paperpile do more than store PDFs. They deduplicate records imported from multiple databases, sync across devices, enable group collaboration, and integrate directly into writing tools. They turn a collection of files into a working library.
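Deduplication is easier to picture with a small example. The sketch below treats two records as duplicates when they share a DOI or a normalized title. This is a simplified assumption, not the matching logic of Zotero, Mendeley, EndNote, or Paperpile, and the records and DOIs are made up.

```python
import re

def normalize_title(title):
    """Lowercase a title and collapse punctuation and spacing."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()

def record_keys(record):
    """Return the keys (DOI and/or normalized title) that identify a record."""
    keys = []
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        keys.append(("doi", doi))
    title = normalize_title(record.get("title") or "")
    if title:
        keys.append(("title", title))
    return keys

def deduplicate(records):
    """Keep the first record seen for each DOI or normalized title."""
    seen = set()
    unique = []
    for record in records:
        keys = record_keys(record)
        if any(key in seen for key in keys):
            continue  # already have a record with the same DOI or title
        seen.update(keys)
        unique.append(record)
    return unique

# Hypothetical records exported from three different sources.
records = [
    {"title": "Mapping the Field: A Review", "doi": "10.1000/example.1", "source": "database A"},
    {"title": "Mapping the field: a review.", "doi": "10.1000/EXAMPLE.1", "source": "database B"},
    {"title": "Mapping the Field: A Review", "doi": None, "source": "database C"},
    {"title": "A Different Study", "doi": "10.1000/example.2", "source": "database A"},
]

unique = deduplicate(records)
```

Matching on title alone can merge distinct papers that happen to share a title, so a real workflow would likely also compare authors and year before discarding a record.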

But storage alone isn’t enough.

Networked note-taking tools like Obsidian and Roam Research help your ideas connect across themes, methods, and debates. Instead of isolated summaries, your notes link through bidirectional connections. When you can see how a methodology in one paper connects to a finding in another, patterns start to emerge. This is where synthesis begins to feel possible.

For larger projects, structured review platforms like Covidence and Rayyan add another layer. They support abstract screening, inclusion tracking, team collaboration, and audit trails. AI can assist with prescreening and deduplication, saving time while keeping human judgment central.

Phase 4: Writing with integrated citation

Modern writing environments no longer sit separate from literature management.

You can write in Overleaf with BibTeX integration, Google Docs with citation plugins, or Scrivener for longer projects. Citations update automatically. Libraries sync in real time. References stay consistent as drafts evolve.
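For readers who have not seen BibTeX before, the sketch below shows the general shape of an entry by generating one from a Python dictionary. The `to_bibtex` helper, the citation key, and the field values are all hypothetical. In practice a reference manager exports these entries for you.

```python
def to_bibtex(key, entry_type, fields):
    """Format one BibTeX entry from a citation key, entry type, and field dict.

    This sketch does not escape special LaTeX characters in field values.
    """
    lines = [f"@{entry_type}{{{key},"]
    for name, value in fields.items():
        lines.append(f"  {name} = {{{value}}},")
    lines.append("}")
    return "\n".join(lines)

# Hypothetical reference used only to show the format.
entry = to_bibtex(
    "doe2024example",
    "article",
    {"author": "Doe, Jane", "title": "An Example Title", "year": "2024"},
)
print(entry)
```

In a LaTeX document, the entry would then be cited by its key, for example with `\cite{doe2024example}`, and the bibliography is rebuilt whenever the library file changes.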

This integration reduces cognitive load. You don’t lose momentum switching between reading and writing. You stay focused on argument and insight rather than formatting and administration.

What actually makes modern literature reviews effective?

Not the number of tools. Not the most advanced AI. What matters is the combination of:

- Discovery before rigid search

- Systematic documentation

- Networked organization

- Iterative refinement

- Critical engagement with conflicting evidence

The most effective researchers think in networks, not lists. You revisit papers as new questions emerge. You follow connections between ideas. Over time, the literature review becomes your evolving map of the research field rather than a requirement to complete.

When to use generative AI tools (and when not to)

Modern workflow tools can improve your search and reduce the feeling of being overwhelmed, and AI is a big part of that.

Large language models (LLMs) can play different roles throughout a literature review. The key is thinking about what you want them to help with at each stage. During exploration, generative AI can help you navigate the volume and silo problems by surfacing relevant papers, highlighting patterns, and pointing you toward promising directions.

But the core work of reading and interpreting the literature still matters. If AI does all the summarizing, you may save time, but you might also miss the insights that shape your thinking as a researcher.

The table below draws on the following tutorials, which are well worth watching if you’d like to learn how generative AI tools can support different stages of your research workflow:

- Andy Stapleton’s “How to do a literature review (stress-free!)”

- Ilya Shabanov in Paperpal’s “Master your literature review workflow: From analysis to writing”

From overload to pattern recognition

If literature reviews feel harder now than they once did, that isn’t a sign you’re falling behind. The research environment itself has changed. There is more work, more noise, and more pressure to see the landscape clearly.

Modern literature review workflows help you shift out of pure accumulation and into pattern recognition. It’s the move from scattered PDFs to connected thinking, from reactive searching to more intentional navigation.

Some newer tools are built to surface relationships across papers so those patterns become easier to notice. But the real clarity still comes from how you think about the field and how your questions evolve over time.

👉 If you're ready to move from scattered PDFs to a clearer map of your field, start with one key paper in ResearchRabbit, or import your existing library to map your research landscape.
